Note to the reader: I wrote these notes mostly because I found the paper confusing the first two times I read it and I think it’s important to have a precise handle on different arguments around the state of technology and research.

Bloom, Jones, Van Reenen, and Webb ask “Are Ideas Getting Harder to Find?”

Spoiler alert: Yes.

The paper also asks “Are scientists becoming less productive?” The answer to that is also yes, but only for a very specific and non-intuitive definition of ideas. Understanding this paper is all about grokking definitions and the context of its arguments. At the end of the day the paper is pushing back against the endogenous growth model of economics, which assumes that a fixed number of scientists should be able to generate a constant rate of exponential growth.

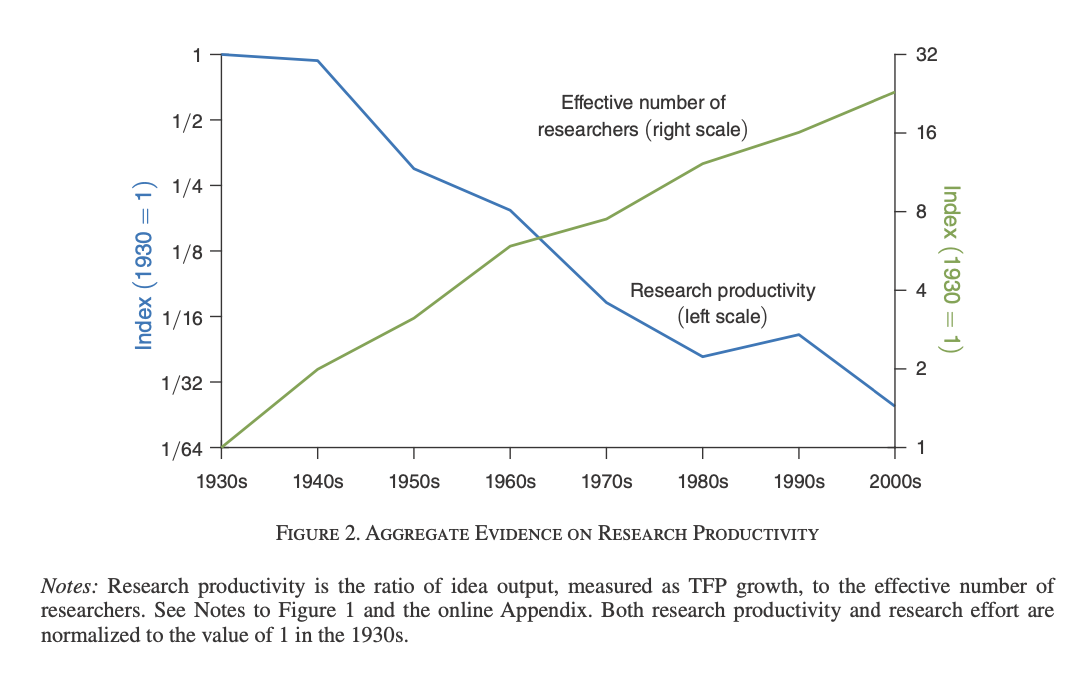

In all fairness, the authors do note in the middle of page five that a more accurate title might have been “Is exponential growth getting harder to achieve?” Unfortunately, that note is buried so deep that it doesn’t offset the impression left by graphs like the one below, which, unless you understand the embedded assumptions about ideas, amount to “wow, scientists today suck compared to the 1930s. I mean, Einstein and Bohr were pretty badass, but all the 1930s scientists taken together were 30x better than 2000s scientists?”

According to the paper, in growth economics an idea is defined as a thing that creates a constant exponential rate of growth. So by definition if the growth rate goes down in a year, the number of ideas that were created that year is also lower. The model treats scientists as miners for these ideas. Accordingly, their productivity is ideas produced per scientist per year. Intuitively, someone’s productivity is the number of widgets they can create per unit time. So measuring a scientist’s productivity in ideas per year seems … reasonable. However, because of how we’ve defined ideas, if the economy’s growth rate stays constant and the number of scientists has gone up, that means that those scientists have become less productive because the number of ideas per scientist has gone down. In other words, if a fixed number of scientists cannot create a constant exponential growth rate in the value of all the widgets being produced, they are becoming less productive.
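To make the definition concrete, here is a minimal sketch in Python. The numbers are made up for illustration and do not come from the paper; the only thing borrowed from the text above is the definition of research productivity as the growth rate (the “ideas”) divided by the number of scientists.

```python
def research_productivity(growth_rate, n_scientists):
    """Ideas per scientist per year, where 'ideas' are measured as the growth rate."""
    return growth_rate / n_scientists

# Hypothetical numbers: growth holds steady at 2% a year while headcount doubles.
before = research_productivity(growth_rate=0.02, n_scientists=1_000)
after = research_productivity(growth_rate=0.02, n_scientists=2_000)

# Same growth rate, twice the scientists: measured productivity is cut in half,
# even though the economy is growing exactly as fast as before.
ratio = after / before
print(ratio)
```

Nothing about the individual scientists has to change for this number to fall. It drops purely because the denominator grew while the growth rate stayed flat.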

The implied null hypothesis of endogenous growth theory is that a fixed number of constantly productive scientists should be able to output enough science to keep an economy growing exponentially at a constant rate forever. This is, in my opinion, absurd.

“Hey couple hundred folks doing science at the end of the 19th century - y’know if you don’t keep the economy growing at the same rate you’re slacking on the job.”

I have very little understanding of traditional endogenous growth economics so I wonder if this is a gross misinterpretation of its null hypothesis. There are several other points that matter for thinking about this area but aren’t part of the paper, so I won’t dig into them here. One is the usefulness of GDP and the TFP derived from it as metrics for progress - especially in research. Another is the assumption that the equilibrium state of GDP is to increase at a constant rate, which underlies the definition of ideas. But these are other discussions for other times (and frankly I don’t understand them well enough to weigh in).

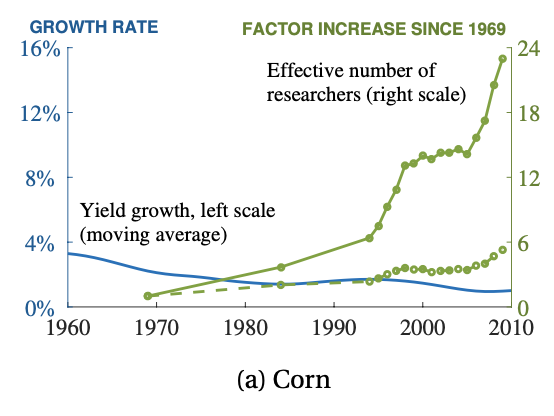

The rest of the paper makes a lot of sense if you view it through the lens of providing evidence that throughout the economy, a constant number of researchers are unable to discover and invent enough to maintain a constant exponential growth rate. That isn’t to say that most areas haven’t been able to maintain a constant exponential growth rate, though. In my mind, “ideas getting harder to find” leads to “there are fewer ideas to be found” which would imply slowing or stagnant growth. This is the case in a few areas that the authors examined, but in many areas there’s been a lot of progress! It feels unfair to compare constant growth rates to absolute numbers of researchers.

You see this graph and think “doooooooooom!”

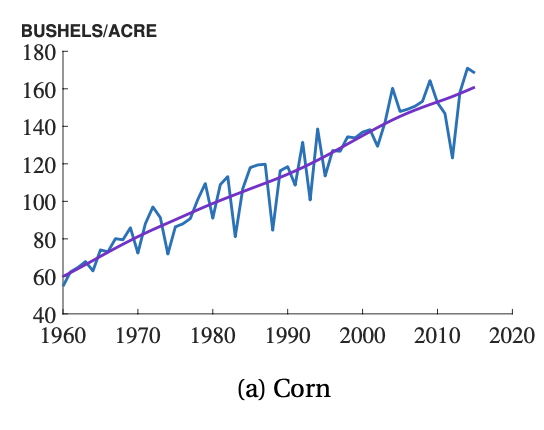

But then you see this graph and it’s like “Wow, we’ve managed to almost triple the number of bushels of corn an acre of land can produce - that’s awesome!”

I realize that it’s not completely fair to critique a paper’s emotional impact but at the end of the day we are not creatures of pure logic and subconscious optimism or pessimism really has a big impact.

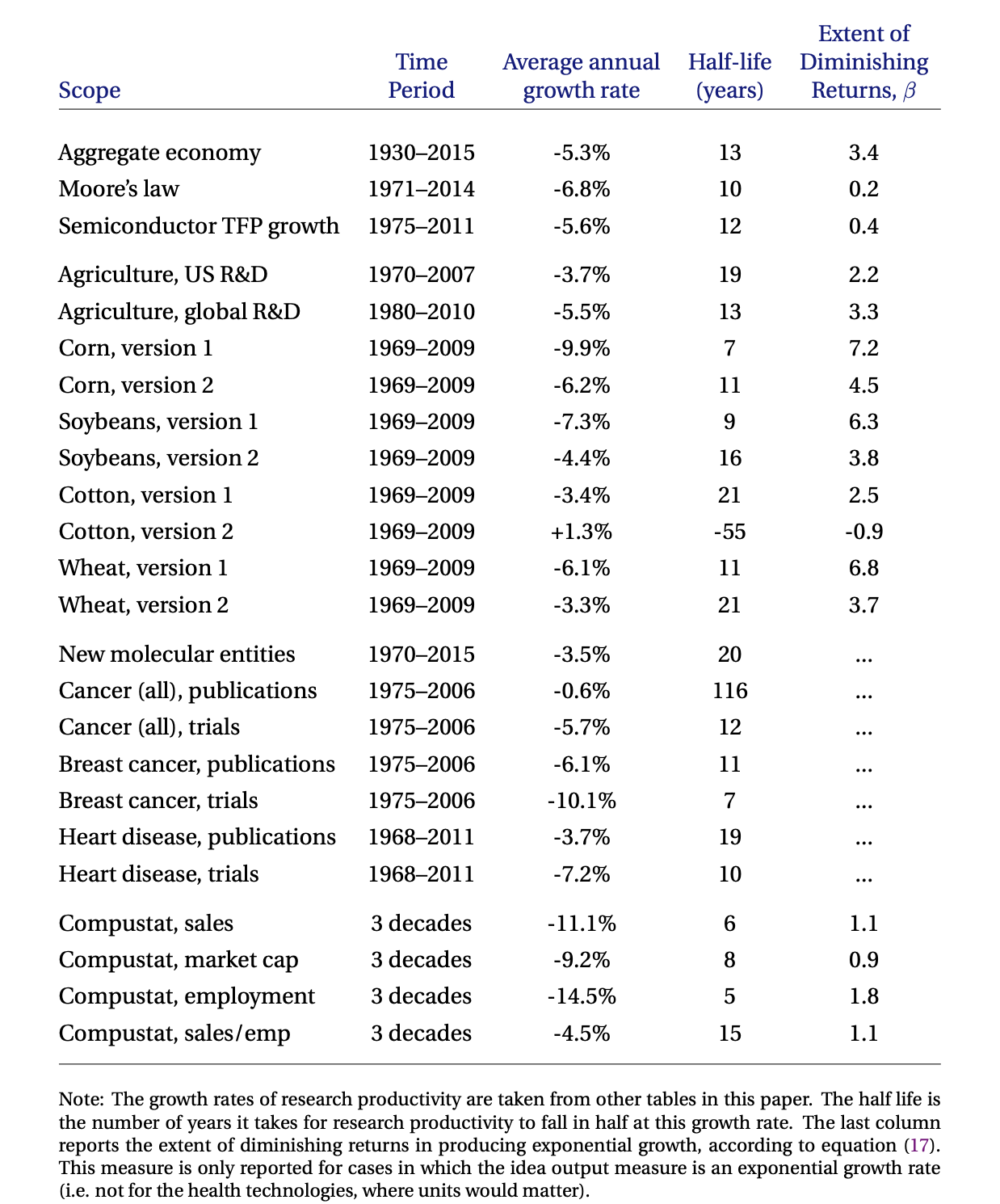

Most of the paper is devoted to hammering home the point that we can’t get exponential outputs with constant inputs. Near the end is a great table where they discuss how fast the inputs need to grow in order to get those exponential outputs.

That β in the far right column is basically the rate at which scientists are ‘becoming less productive’ or rather, the rate at which the number of scientists needs to grow to maintain a constant growth rate in that area. Note that Moore’s law is actually doing pretty well. But not nearly as well as Cotton, version 2! Wow!

I (independently of the authors) have two ways of interpreting these β values. One reading treats high β values as a sign of dying or stagnant fields where not much improvement is possible. On the other hand, precisely because many weird and ambitious people hold that first opinion, high β values might also point to opportunities for radically new paradigms that haven’t been uncovered. I (perhaps stupidly) like the latter.

The primary point of this paper is not to make a strong claim about the vague sense many people have that we aren’t discovering or inventing as many amazing things as we used to. It is pushing back against the endogenous growth model of economics that assumes that a fixed number of scientists should be able to generate a constant rate of exponential growth. So it’s less “all you people are silly for expecting flying cars” and more “all you economists are silly for using unrealistic assumptions that make your math work.”

Hyperbole aside, the paper does have an important upshot: our null hypothesis should be that exponential progress is going to require exponential inputs and specifically exponential numbers of researchers. Scott Alexander does an excellent job arguing that logically, this should be the case.

All else being equal, that paints a picture where we first train the entire human population to be researchers in order to maintain growth and then, because the human population is not growing at an exponential rate, growth stagnates anyway. That’s a bleak vision. In the short term, it underscores the importance of enhancing research productivity any way we can. I would argue that many trends have been moving in the opposite direction, toward less research productivity. But, as Scott notes, it’s a bit absurd to think that we could make researchers exponentially better through better institutions alone. The beacon of hope, as he notes, is to decouple exponentially increasing research inputs from the need for exponentially increasing legions of researchers.

The analogy I like is to nitrogen-based fertilizers. Before the Haber-Bosch process, nitrogen-based fertilizer (and thus crop output) was coupled to the supply of organic sources of nitrogen - especially guano. Supporting an exponentially growing population required an exponentially growing food supply and thus an exponentially growing supply of guano. People literally fought wars and killed each other over bird poop. Given everything that he knew, Malthus was absolutely right - the way everything was going, the world was going to run out of guano, and thus fertilizer, and thus food, resulting in mass starvation. The Haber-Bosch process pulled nitrogen out of the effectively infinite atmosphere, decoupling nitrogen from organic sources. We didn’t stop needing exponentially more nitrogen, it just no longer required exponentially more guano.

Can we replicate the Haber-Bosch process for research? I don’t know. Scott suggests that AI might be the equivalent of the Haber-Bosch process for research. I hope he’s right, and if he is, it suggests that it’s important to work on automated research that doesn’t require full general AI. 1

At the same time, it’s also worth asking “what are the implications of those different β values?” The paper is light on absolute numbers of researchers. It’s a very different scenario if we need the entire world’s population to do research within a generation vs. hundreds of years. A quick Google search suggests that there are about 8 million researchers in the entire world. According to the data in the paper, the number of researchers needs to double every 17 years to maintain constant growth rates. That means that in 2037 we would need 16 million researchers, in 2054 we’d need 32 million, and in 2071 we’d need 64 million. Generously assuming a constant population, that’s roughly 1% of the world’s population in 2071 doing research to maintain 20th century growth rates. Assuming the doubling time for growth-maintaining researchers doesn’t shorten (which, unfortunately, it might), those numbers suggest that while we need to break the researcher-idea production coupling, we’re not in a doomsday scenario. At the same time, if you buy into the importance of science-driven growth, 2 it is clear that we can’t continue doing things exactly as we have in the past.
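The back-of-the-envelope arithmetic above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 2020 starting year is inferred from the 2037/2054/2071 milestones, the 7.8 billion population figure is my own rough placeholder held constant, and the function names are hypothetical.

```python
import math

def researchers_needed(start_count, start_year, year, doubling_years=17):
    """Headcount needed in `year` if it must double every `doubling_years` years."""
    return start_count * 2 ** ((year - start_year) / doubling_years)

def years_until_headcount(start_count, target_count, doubling_years=17):
    """Years of steady doubling needed to grow from start_count to target_count."""
    return doubling_years * math.log2(target_count / start_count)

START_YEAR = 2020          # assumption: implied by the 2037/2054/2071 milestones
START_COUNT = 8_000_000    # rough worldwide researcher count quoted in the text
WORLD_POPULATION = 7.8e9   # assumption: rough 2020 population, held constant

projection = {}
for year in (2037, 2054, 2071):
    needed = researchers_needed(START_COUNT, START_YEAR, year)
    projection[year] = needed
    share = needed / WORLD_POPULATION
    print(f"{year}: {needed / 1e6:.0f} million researchers, {share:.2%} of population")

# How long until the required headcount equals the whole (constant) population?
years_to_everyone = years_until_headcount(START_COUNT, WORLD_POPULATION)
print(f"Everyone is a researcher after about {years_to_everyone:.0f} years")
```

Under these assumptions the 2071 share comes out to about 0.8% of the population, in line with the roughly 1% figure, and the required headcount would not reach the entire population for roughly 170 years. That is closer to “hundreds of years” than “within a generation”, though a shorter doubling time would pull it in quickly.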

If you’re interested in this topic, some other things worth reading:

- Scott Alexander’s Is Science Slowing Down?

- Patrick Collison and Michael Nielsen’s Science is Getting Less Bang for its Buck

- Jay Bhattacharya and Mikko Packalen’s Stagnation and Scientific Incentives

- Tyler Cowen and Ben Southwood’s Is the rate of scientific progress slowing down?

- Jason Crawford’s Teasing Apart S-Curves

(Very open to other suggestions to add to this list!)

Thanks to Martin Permin, Luke Constable, and Cheryl Reinhardt for feedback and suggestions.

1. There has been some cool work on this subject, but it feels underdeveloped compared to the bulk of AI research.

2. Full disclosure: I do think science-driven growth is important. My reasoning follows the lines of Tyler Cowen’s arguments in Stubborn Attachments and Peter Thiel’s arguments around the relationship between growth and violence.
